Cybersecurity researchers have discovered a critical security flaw in the artificial intelligence (AI)-as-a-service provider Replicate that could have allowed threat actors to gain access to proprietary AI models and sensitive information.

“Exploitation of this vulnerability would have allowed unauthorized access to the AI prompts and results of all of Replicate’s platform customers,” cloud security firm Wiz said in a report published this week.

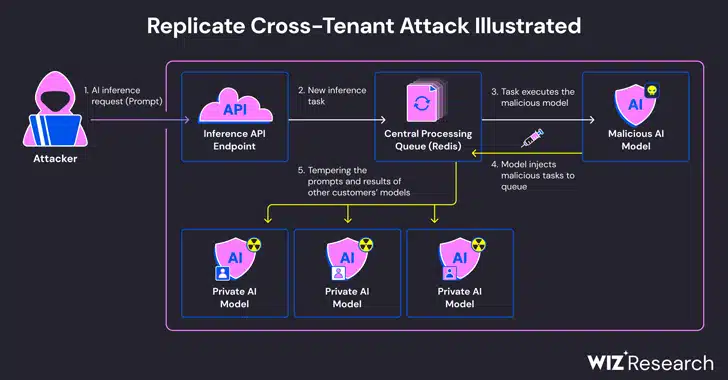

The issue stems from the fact that AI models are typically packaged in formats that allow arbitrary code execution, which an attacker could weaponize to perform cross-tenant attacks by means of a malicious model.
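
Python’s `pickle` serialization, which PyTorch’s `torch.save` builds on, is one widely cited example of such a format. The report does not say which formats are involved on Replicate, and Wiz’s attack used a Cog container rather than a pickle file. The sketch below only illustrates the general point: an object can define `__reduce__` so that simply loading it calls a function of the attacker’s choosing. A harmless `eval` stands in for a real payload such as a shell command.

```python
import pickle


class MaliciousModel:
    """Stand-in for a 'model' file crafted by an attacker (illustrative only)."""

    def __reduce__(self):
        # pickle calls __reduce__ when serializing. The returned (callable, args)
        # pair is stored in the byte stream, and pickle.loads() later calls
        # callable(*args). Here it evaluates a harmless expression; a real
        # attacker could substitute something like os.system.
        return (eval, ("6 * 7",))


blob = pickle.dumps(MaliciousModel())  # the "model" that gets uploaded

# The victim merely loads the file, and the embedded code runs immediately.
result = pickle.loads(blob)
print(result)  # 42
```

Because the code runs at load time, inspecting a model only after it has been deserialized is already too late.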

Replicate makes use of an open-source tool called Cog to containerize and package machine learning models that could then be deployed either in a self-hosted environment or to Replicate.

Wiz said that it created a rogue Cog container and uploaded it to Replicate, ultimately employing it to achieve remote code execution on the service’s infrastructure with elevated privileges.

“We suspect this code-execution technique is a pattern, where companies and organizations run AI models from untrusted sources, even though these models are code that could potentially be malicious,” security researchers Shir Tamari and Sagi Tzadik said.

The attack technique devised by Wiz then leveraged an already-established TCP connection to a Redis server instance within the Kubernetes cluster hosted on Google Cloud Platform to inject arbitrary commands.

What’s more, because the centralized Redis server acts as a queue for managing multiple customers’ requests and their responses, the researchers found that it could be abused to facilitate cross-tenant attacks: by tampering with the queue, an attacker could insert rogue tasks that affect the results of other customers’ models.
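
The toy simulation below is a hedged illustration of why a shared queue is dangerous when workers trust whatever task they pop. An in-memory `deque` stands in for the Redis queue, and the task fields (`customer`, `prompt`) and helper names are hypothetical. None of this reflects Replicate’s actual schema or code.

```python
from collections import deque

# Hypothetical in-memory stand-in for a shared Redis list used as a job queue.
queue = deque()
results = {}


def submit(customer_id, prompt):
    """Legitimate path: a customer's request is enqueued with their ID."""
    queue.append({"customer": customer_id, "prompt": prompt})


def worker_step():
    """Pop one task and store its output under the customer it claims to be."""
    task = queue.popleft()
    # The worker trusts the task's "customer" field as-is. Nothing verifies
    # that the producer of this task really is that customer.
    results[task["customer"]] = f"output for: {task['prompt']}"


submit("alice", "summarize my contract")

# Anyone with write access to the same queue can enqueue a task that claims
# to belong to another tenant.
queue.append({"customer": "alice", "prompt": "attacker-controlled input"})

worker_step()
worker_step()
print(results["alice"])  # output for: attacker-controlled input
```

The second, injected task silently overwrites the first customer’s result, which is the kind of integrity problem described in the next paragraph.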

These rogue manipulations not only threaten the integrity of the AI models, but also pose significant risks to the accuracy and reliability of AI-driven outputs.

“An attacker could have queried the private AI models of customers, potentially exposing proprietary knowledge or sensitive data involved in the model training process,” the researchers said. “Additionally, intercepting prompts could have exposed sensitive data, including personally identifiable information (PII).”

The shortcoming, which was responsibly disclosed in January 2024, has since been addressed by Replicate. There is no evidence that the vulnerability was exploited in the wild to compromise customer data.

The disclosure comes a little over a month after Wiz detailed now-patched risks in platforms like Hugging Face that could allow threat actors to escalate privileges, gain cross-tenant access to other customers’ models, and even take over the continuous integration and continuous deployment (CI/CD) pipelines.

“Malicious models represent a major risk to AI systems, especially for AI-as-a-service providers because attackers may leverage these models to perform cross-tenant attacks,” the researchers concluded.

“The potential impact is devastating, as attackers may be able to access the millions of private AI models and apps stored within AI-as-a-service providers.”